AI Output Evaluation Strategies

Generative AI tools may produce biased, false, or discriminatory information that does not represent you or your beliefs. Such information may also be unsuitable for academic discussions or fail to meet assignment requirements for students. The only way to spot misleading, biased, or discriminatory information is to assess the output critically. Here are two evaluation strategies to help you navigate AI output.

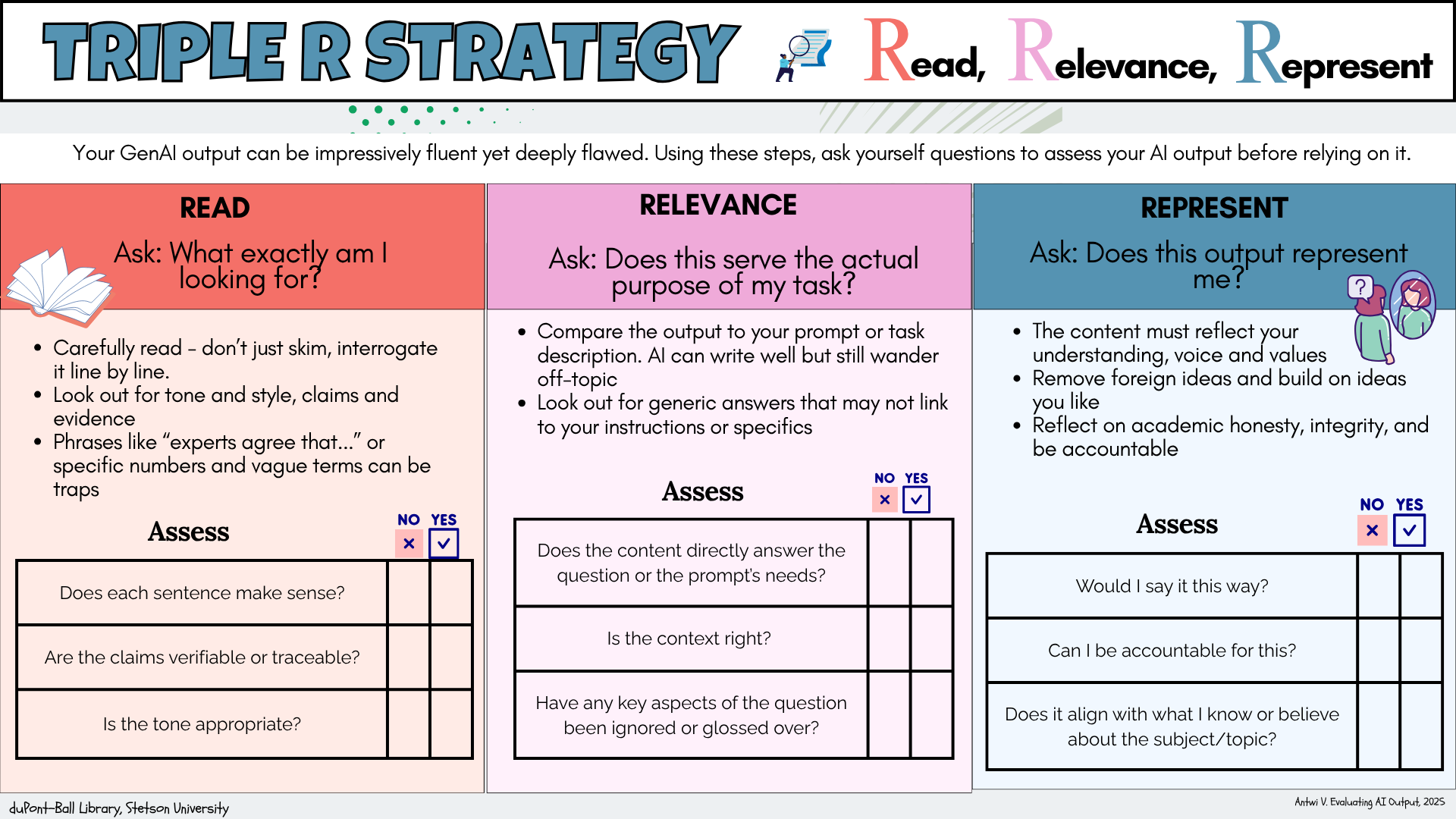

The Triple R

Your GenAI output can be impressively fluent yet deeply flawed. This strategy provides three action items (Read, Relevance, Represent) to undertake when evaluating AI output. Use the action items to ask questions and reflect thoughtfully on the answers before relying on your AI output.

This strategy reminds users of academic integrity and why it matters when using AI for academic work.

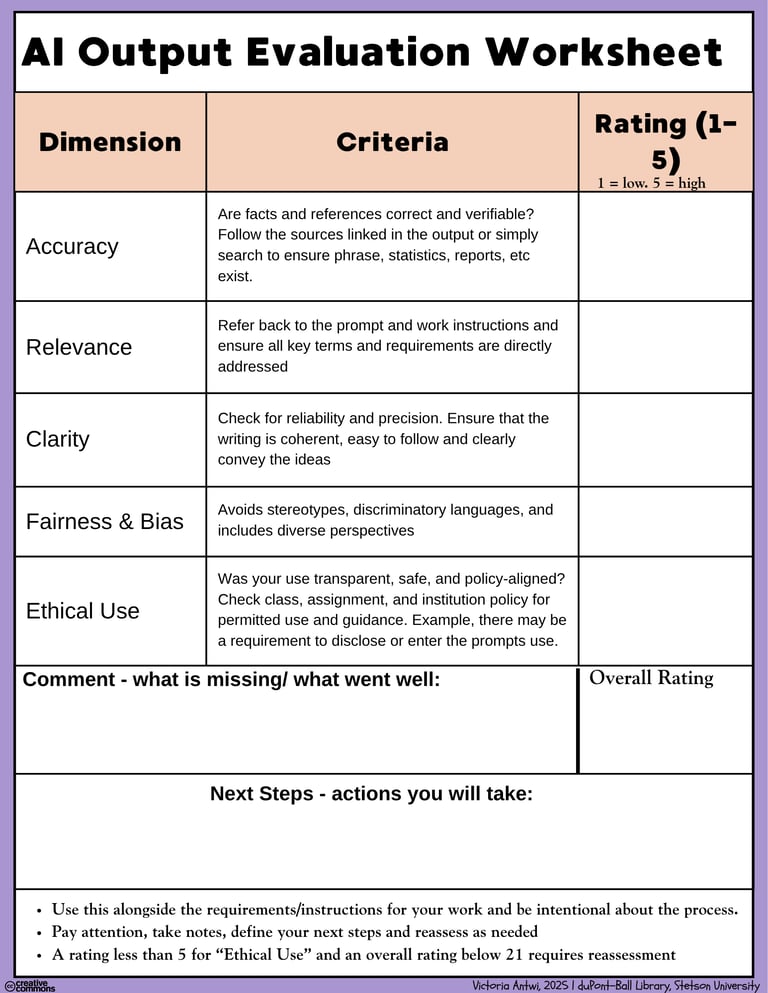

AI Output Evaluation Worksheet

Evaluating AI output involves checking its quality. This worksheet lists the key dimensions to consider when assessing that quality.

The worksheet presents five dimensions, each with criteria or things to look out for when evaluating. Users rate each dimension after a thoughtful assessment. Based on the score for each dimension and the overall score, users then indicate their next course of action.

Reminder: Be intentional about the evaluation process. Pause, reflect, score, and reassess.